DPSP Global 2026 (London) was a useful checkpoint, not because it introduced a single “new thing,” but because it reinforced a shift already underway across utilities, EPCs, OEMs, and commissioning teams. In protection & automation, success is no longer defined by functional operation alone. Increasingly, the baseline expectation is evidence, repeatability, and traceability, from design through commissioning and into lifecycle maintenance.

This post is a “throwback” with a forward-looking intent: it looks at what the event signals for engineering leaders who need to reduce risk, accelerate delivery, and make acceptance decisions that hold up under real operational scrutiny.

1) The Core Message: Test to Prove, Not Just to Pass

A recurring theme across technical conversations was the shift from compliance testing to system-behavior testing.

- Compliance testing answers: “Does it meet the stated requirement?”

- System-behavior testing answers: “Does it behave correctly under realistic network and operational conditions—and can we prove it?”

From an executive standpoint, this matters because it directly impacts:

- schedule predictability (fewer late discoveries in SAT),

- rework reduction (less “it worked in the lab” disconnect),

- auditability (clear technical records supporting acceptance),

- operational risk (fewer ambiguous failure modes after energization).

2) Digital Substations & IEC 61850: Interoperability That Stands Up in the Field

IEC 61850 is no longer treated as a “check-the-box” standard. The practical demand is for interoperability with verifiable, network-level evidence.

What “interoperability in the real world” tends to require:

- alignment between engineering intent (SCL/SCD) and observed traffic,

- objective confirmation of publisher/subscriber behavior,

- clear validation of timing, quality attributes, and dataset integrity,

- a test approach that produces repeatable outcomes across teams and test windows.

In short: “connected” is not the same as “validated.”
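One way to make the first two checks concrete is to diff what the design says should be published against what a capture actually shows. The following is a minimal Python sketch, not a definitive implementation. It assumes the expected streams have already been extracted from the SCD and the observed streams from a network capture; SCL parsing and packet decoding are out of scope. The `GooseStream` type, its fields, and all sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GooseStream:
    """Identity of one GOOSE stream (hypothetical, simplified fields)."""
    gocb_ref: str  # GOOSE control block reference
    app_id: int    # APPID as configured or as seen on the wire
    dataset: str   # dataset reference


def compare_streams(expected, observed):
    """Return streams missing from the capture and streams absent from the design."""
    expected_set, observed_set = set(expected), set(observed)
    return {
        "missing": sorted(expected_set - observed_set, key=lambda s: s.gocb_ref),
        "unexpected": sorted(observed_set - expected_set, key=lambda s: s.gocb_ref),
    }


# Illustrative values only; not taken from any real project.
expected = [
    GooseStream("IED1LD0/LLN0$GO$gcbTrip", 0x0001, "IED1LD0/LLN0$dsTrip"),
    GooseStream("IED2LD0/LLN0$GO$gcbBlock", 0x0002, "IED2LD0/LLN0$dsBlock"),
]
observed = [
    GooseStream("IED1LD0/LLN0$GO$gcbTrip", 0x0001, "IED1LD0/LLN0$dsTrip"),
    GooseStream("IED3LD0/LLN0$GO$gcbTest", 0x0003, "IED3LD0/LLN0$dsTest"),
]

result = compare_streams(expected, observed)
for kind, streams in result.items():
    for stream in streams:
        print(f"{kind}: {stream.gocb_ref} (APPID 0x{stream.app_id:04X})")
```

A stream reported as missing or unexpected is a finding to investigate, not a verdict: the capture window, the capture point, or VLAN filtering may explain the difference.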

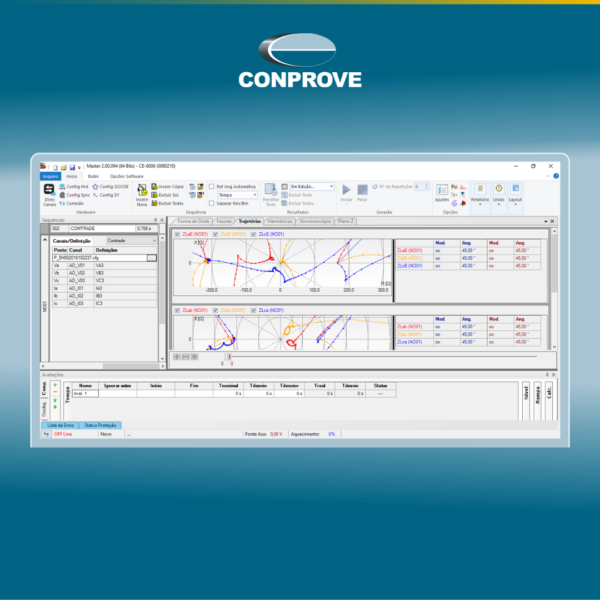

3) GOOSE and Sampled Values: Validation as a Discipline, Not a Final Step

GOOSE and SV discussions consistently pointed to the same operational pain: when validation is treated as a late-stage task, the cost of issues rises sharply.

A more mature posture is to treat networked messaging validation as continuous across the project lifecycle:

- Engineering / FAT: confirm configuration, expected behavior, and testability

- SAT / Commissioning: validate performance under site constraints and real topology

- Operations & Maintenance: support troubleshooting with consistent artifacts (captures, logs, reports)

This aligns with a governance model where acceptance isn’t a single moment—it’s a chain of evidence.

4) IBRs, WAMPACS, and VPACs: Raising the Bar on Performance Criteria

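The growing impact of IBRs (Inverter-Based Resources), together with broader discussions of wide-area monitoring, protection, and control systems (WAMPACS) and virtual protection, automation, and control systems (VPACs), highlights a key reality: modern systems demand measurable performance, not just correct logic.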

That typically translates into:

- tighter definitions of what “correct response” means under dynamic conditions,

- more explicit acceptance criteria tied to time, accuracy, determinism, and observability,

- stronger expectations for tests to be reproducible and comparable across assets and sites.

For leadership teams, the implication is clear: requirements must become verifiable performance targets; otherwise, acceptance becomes subjective and troubleshooting becomes expensive.
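As an illustration of what a verifiable performance target can look like, the sketch below turns a timing requirement into a pass/fail check over repeated test runs. It is a simplified Python example under stated assumptions: the `Criterion` type, the 10 ms limit, and the measured values are all hypothetical and do not come from any standard or project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """A time-based acceptance criterion (hypothetical structure)."""
    name: str
    limit_ms: float  # maximum allowed response time in milliseconds


def evaluate(criterion, samples_ms):
    """Judge a set of measured response times against the worst-case limit."""
    if not samples_ms:
        raise ValueError("at least one measurement is required")
    worst = max(samples_ms)
    return {
        "criterion": criterion.name,
        "limit_ms": criterion.limit_ms,
        "worst_ms": worst,
        "runs": len(samples_ms),
        "passed": worst <= criterion.limit_ms,
    }


# Hypothetical limit and measurements, for illustration only.
trip_transfer = Criterion(name="trip signal transfer time", limit_ms=10.0)
site_a = evaluate(trip_transfer, [6.2, 7.1, 9.8])
site_b = evaluate(trip_transfer, [6.2, 11.4])

print(site_a)
print(site_b)
```

Because the limit, the worst observed value, and the number of runs are recorded together, the same criterion can be compared across assets and sites instead of being re-argued for each one.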

5) What This Means for Project Delivery and Governance

Across projects, the biggest efficiency gains tend to come from standardizing three things:

a) Evidence Packages

Define what “good” looks like for acceptance:

- network captures (where applicable),

- device logs,

- test reports with clear pass/fail criteria,

- configuration baselines and version traceability.
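An evidence package of this kind can be tied together with a small manifest that records a checksum for each artifact next to the configuration version it was produced under. The sketch below is one possible approach in Python using only the standard library; the manifest layout, file names, and version string are assumptions made for illustration, and the artifact contents are placeholders held in memory rather than real files.

```python
import hashlib
import json


def build_manifest(artifacts, config_version):
    """Build a manifest that ties each artifact to a SHA-256 digest."""
    entries = [
        {
            "name": name,
            "sha256": hashlib.sha256(data).hexdigest(),
            "size_bytes": len(data),
        }
        for name, data in sorted(artifacts.items())
    ]
    return {"config_version": config_version, "artifacts": entries}


def verify(manifest, artifacts):
    """Return names of artifacts that are missing or no longer match the manifest."""
    changed = []
    for entry in manifest["artifacts"]:
        data = artifacts.get(entry["name"])
        if data is None or hashlib.sha256(data).hexdigest() != entry["sha256"]:
            changed.append(entry["name"])
    return changed


# Placeholder contents and hypothetical names, for illustration only.
artifacts = {
    "sat_goose_capture.pcap": b"placeholder capture bytes",
    "relay_event_log.txt": b"placeholder log text",
    "test_report.pdf": b"placeholder report bytes",
}
manifest = build_manifest(artifacts, config_version="SCD-rev-example")
print(json.dumps(manifest, indent=2))

# Simulate a log that was edited after the package was accepted.
tampered = dict(artifacts)
tampered["relay_event_log.txt"] = b"edited after acceptance"
changed = verify(manifest, tampered)
print("changed artifacts:", changed)
```

Storing the manifest with the acceptance record lets a later reviewer confirm that the captures, logs, and reports on file are the ones that were actually assessed.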

b) Repeatable Test Procedures

If two teams run the same test, results should be comparable:

- consistent test setup descriptions,

- controlled parameter sets,

- documented assumptions and limitations.

c) Traceability End-to-End

Connect: requirement → engineering design → configuration → test execution → evidence → acceptance → maintenance reference

This is how organizations reduce their dependency on “tribal knowledge” and protect schedule certainty.
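The chain above can also be checked mechanically. As a rough Python sketch, assume each requirement is tracked as a record with one field per stage of the chain; the stage names mirror the chain in this section, while the record format and the identifiers are hypothetical.

```python
# Stages of the traceability chain, in order.
STAGES = [
    "requirement",
    "design",
    "configuration",
    "test_execution",
    "evidence",
    "acceptance",
    "maintenance_reference",
]


def missing_links(record):
    """List the stages that have no reference recorded for this requirement."""
    return [stage for stage in STAGES if not record.get(stage)]


# Hypothetical records; identifiers are illustrative only.
records = {
    "REQ-001": {
        "requirement": "REQ-001",
        "design": "DES-014",
        "configuration": "SCD-rev-example",
        "test_execution": "TST-031",
        "evidence": "EVD-031",
        "acceptance": "ACC-007",
        "maintenance_reference": "MNT-002",
    },
    "REQ-002": {
        "requirement": "REQ-002",
        "design": "DES-015",
        "configuration": "SCD-rev-example",
        "test_execution": "TST-032",
    },
}

gaps = {req: missing_links(rec) for req, rec in records.items() if missing_links(rec)}
for req, stages in gaps.items():
    print(f"{req}: missing {', '.join(stages)}")
```

Run before a handover review, a check like this shows which requirements were tested but never linked to evidence or an acceptance decision.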

Questions Worth Asking (and Standardizing Internally)

- In your environment, what consumes the most time today: interoperability, field validation, or troubleshooting?

- What do you consider “sufficient evidence” for acceptance: captures, logs, test reports, or a complete package?

- Do your projects follow a consistent evidence standard—or does each delivery reinvent the format?

Conclusion: The Industry Is Moving Toward Defensible Acceptance

The most consistent DPSP 2026 takeaway was not a feature trend—it was a quality standard: acceptance must be defensible, repeatable, and traceable. Organizations that operationalize this mindset typically see fewer commissioning surprises, faster root-cause analysis, and stronger confidence in handover decisions.